We’ve audited a lot of LinkedIn campaigns. And the most common reason a good campaign gets killed? Someone looked at conversions after two weeks and pulled the plug.

LinkedIn doesn’t work that way. People aren’t logging in to buy software. They’re scrolling between meetings, reading posts, and noticing brands over time. Your ad might influence a deal that shows up weeks later through organic search, direct traffic, or a sales call — and get zero credit for it.

Add in long B2B buying cycles and multiple decision-makers, and early conversion numbers get even more misleading.

Measuring LinkedIn ads properly means looking at influence, not just immediate actions. Are you reaching the right accounts? Are you building demand? Is pipeline starting to move?

That’s the difference between pausing campaigns too early and scaling the ones that actually drive revenue.

Why Measuring LinkedIn Ads Performance Based on Conversions Alone Fails

If you treat LinkedIn like a direct response channel, the numbers will almost always look bad.

Start with CTR. Teams see a 0.4% click-through rate and assume the campaign is failing. But on LinkedIn, most of the impact happens without the click.

People see your ad a few times while scrolling. They recognize the brand. The message sticks. Weeks later, they Google your company, respond to an email, or ask a peer about you. The deal shows up as organic or direct — even though LinkedIn created the awareness.

This is why view-through and delayed conversions are so common on the platform. LinkedIn is built for passive consumption, not impulse purchases.

Then you layer in cross-device and cross-channel behavior. Someone sees your ad on their phone, visits your site from a laptop, and converts after a sales call. The platform can’t connect all of that cleanly.

So the dashboard ends up doing two things at once: over-crediting some conversions and under-crediting the real influence of your campaigns.

Conversions alone are a dangerous way to judge LinkedIn performance. They’re one piece of a much bigger story.

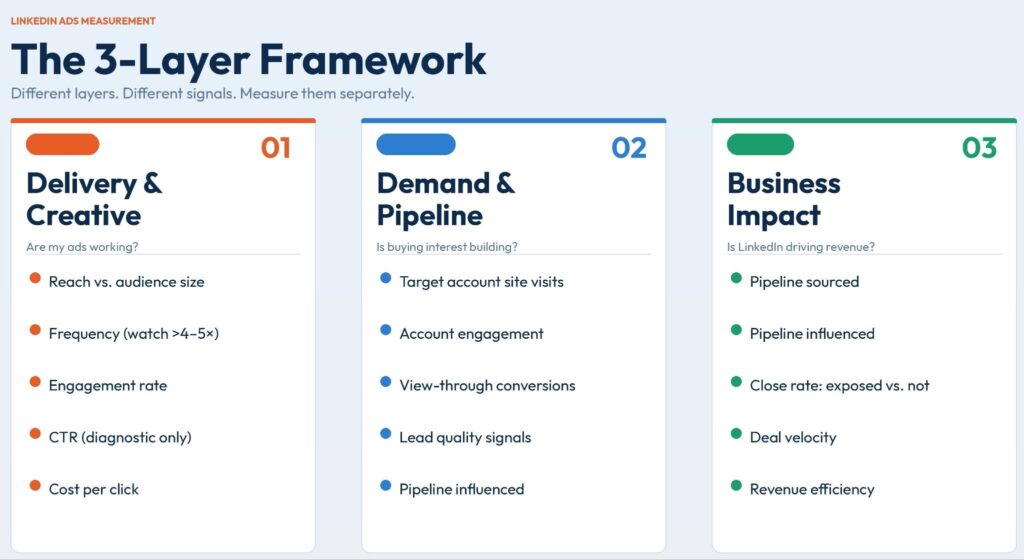

The 3 Layers of LinkedIn Ads Performance You Need to Measure

Here’s how we think about it. LinkedIn performance doesn’t live in a single column — it happens in layers. You have early signals that tell you if the ads are working, mid-funnel signals that show real buying interest, and late-stage signals that prove business impact.

Mix those layers together and you make bad decisions. Separate them, and the numbers start to make sense.

Layer 1 – Delivery & Creative Performance (Early Signals)

This layer answers one simple question: are my ads working at all?

Not ‘are they driving revenue.’ Not ‘is the CPL good.’ Just the basics — are we reaching the right people, and are they responding to the creative?

Start with reach versus audience size. If you’re targeting 50,000 people and only reaching 4,000 after two weeks, the campaign isn’t penetrating the audience. Either the budget is too low, the bids are too conservative, or the targeting is too narrow.
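To put a number on that check, here is a minimal sketch of the penetration math. The function name is our own and the figures are the ones from the example above; nothing here comes from a LinkedIn API.

```python
def audience_penetration(reach: int, audience_size: int) -> float:
    """Share of the targeted audience that has seen at least one ad."""
    if audience_size <= 0:
        raise ValueError("audience_size must be positive")
    return reach / audience_size


# Figures from the example above: 4,000 people reached out of 50,000 targeted.
penetration = audience_penetration(4_000, 50_000)
print(f"Audience penetration: {penetration:.0%}")  # Audience penetration: 8%
```

What counts as "too low" depends on budget and audience size, so treat the percentage as something to track week over week rather than a pass/fail score.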

On the flip side, if frequency is climbing fast and engagement is dropping, that’s creative fatigue. People have seen the same ad too many times — time to rotate in something fresh.
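The fatigue pattern can be sketched as a simple week-over-week comparison. Everything below is illustrative: the `WeeklyStats` fields, the sample numbers, and the 25% / 20% thresholds are assumptions you would tune to your own account, not LinkedIn-defined values.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    impressions: int
    reach: int        # unique people who saw the ad that week
    engagements: int  # reactions + comments + shares + saves

    @property
    def frequency(self) -> float:
        # Average number of times each reached person saw the ad.
        return self.impressions / self.reach

    @property
    def engagement_rate(self) -> float:
        return self.engagements / self.impressions


def shows_fatigue(prev: WeeklyStats, curr: WeeklyStats,
                  freq_rise: float = 0.25, eng_drop: float = 0.20) -> bool:
    """True when frequency rose AND engagement rate fell past the thresholds."""
    freq_up = curr.frequency >= prev.frequency * (1 + freq_rise)
    eng_down = curr.engagement_rate <= prev.engagement_rate * (1 - eng_drop)
    return freq_up and eng_down


# Hypothetical two weeks of data for one ad.
week1 = WeeklyStats(impressions=10_000, reach=5_000, engagements=100)
week2 = WeeklyStats(impressions=15_000, reach=5_000, engagements=90)
print(shows_fatigue(week1, week2))  # True
```

Requiring both conditions is deliberate: rising frequency with steady engagement is usually fine, and falling engagement with flat frequency points to a relevance problem rather than fatigue.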

Then look at engagement: reactions, comments, shares, saves. These aren’t vanity metrics — they’re early indicators of relevance. If the right people are interacting with your ads, you’re probably on the right track.

CTR fits here too, but treat it as a diagnostic, not a success metric. Extremely low CTR means the creative or offer probably isn’t resonating. Strong CTR is a good sign. But high CTR alone does not mean the campaign is driving pipeline — and low CTR doesn’t automatically mean it’s failing.

Layer 1 metrics are useful for diagnosing creative issues, spotting targeting problems, understanding audience penetration, and deciding when to refresh ads. They are not useful for judging ROI, deciding whether LinkedIn ‘works,’ or making big budget decisions after two weeks.

Think of Layer 1 as your health check. It tells you if the campaign is alive and moving in the right direction.

Layer 2 – Demand & Pipeline Signals (Mid-Funnel Impact)

Once the campaign has been running long enough, you move into the second layer. This one answers a different question: is LinkedIn creating real buying interest?

Lead volume is the first place most teams look, but it needs context. Ten leads from junior roles at small companies is not the same as five leads from directors at enterprise accounts. Lead quality matters more than raw lead count. Look at job seniority, job function, company size, and whether they match your target account lists.

Next, track MQL and SQL progression. Are LinkedIn leads actually moving forward? Are they turning into meetings and real conversations with sales? If leads are coming in but not progressing, the issue could be targeting, messaging, offer — or even the sales follow-up process.

Matched audience engagement is one of our favorite signals here. Upload your target account list and watch how those companies interact with your ads. If your target accounts are consistently engaging, even without immediate conversions, LinkedIn is doing its job: building awareness inside the accounts that matter most.

Self-reported attribution adds another layer of truth. Add a simple field to your forms: ‘How did you hear about us?’ You’ll be surprised how often people mention LinkedIn, even when the platform gets zero credit in the dashboard. It’s one of the simplest reality checks you can run.

Layer 3 – Business Impact Signals (Revenue & ROI)

This is the layer leadership actually cares about. It answers the big question: is LinkedIn worth scaling?

At this stage, you’re no longer focused on clicks, engagement, or even just leads. You’re looking at how LinkedIn affects real revenue outcomes.

Start with pipeline influenced versus pipeline sourced. Pipeline sourced means the opportunity came directly from a LinkedIn conversion. Pipeline influenced includes deals where the account was exposed to your ads at some point during the buying journey. On LinkedIn, influenced pipeline often tells a more accurate story than sourced pipeline alone.
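The distinction is easy to see with a small worked example. This is a sketch under stated assumptions: the `Opportunity` fields, the `"linkedin"` source label, and the account names are hypothetical stand-ins for whatever your CRM export contains, and we assume sourced deals also count as influenced.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    account: str
    amount: float
    source: str  # channel the CRM credits with creating the opportunity


def pipeline_split(opps, exposed_accounts):
    """Return (sourced, influenced) pipeline value.

    Sourced: opportunities credited directly to LinkedIn.
    Influenced: sourced opportunities plus any opportunity whose
    account appears in the set of ad-exposed accounts.
    """
    sourced = sum(o.amount for o in opps if o.source == "linkedin")
    influenced = sum(
        o.amount for o in opps
        if o.source == "linkedin" or o.account in exposed_accounts
    )
    return sourced, influenced


# Hypothetical CRM export and exposure list.
opps = [
    Opportunity("Acme", 50_000, "linkedin"),
    Opportunity("Globex", 80_000, "organic"),
    Opportunity("Initech", 30_000, "direct"),
]
exposed = {"Acme", "Globex"}

sourced, influenced = pipeline_split(opps, exposed)
print(sourced, influenced)  # 50000 130000
```

In this made-up data, the Globex deal is credited to organic search, yet the account saw the ads. It shows up only in the influenced number, which is exactly the gap a sourced-only view hides.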

Next, look at deal velocity and close rates. Are accounts that saw LinkedIn ads moving through the pipeline faster? Closing at higher rates? If yes, LinkedIn is shaping perception and trust before the sales conversation even starts.

And here’s where a lot of teams get tripped up: don’t obsess over platform ROAS in B2B. It’s almost always misleading. Look instead at broader metrics — total marketing spend versus total pipeline or revenue. That gives you a much clearer picture of whether LinkedIn is actually pulling its weight.

You also don’t need perfect attribution to make good decisions. Attribution in B2B will always be messy. Multiple touchpoints, multiple people, multiple channels. The goal is not perfect tracking. The goal is directional confidence.

If pipeline is growing, target accounts are engaging, deals exposed to LinkedIn are closing more often, and revenue efficiency is improving — then LinkedIn is likely pulling its weight, even if the platform dashboard doesn’t show a perfect ROAS number.

The mindset shift: stop asking ‘Did this campaign drive a conversion?’ Start asking ‘Is this campaign helping us win more deals?’

What to Measure at Each Stage of a LinkedIn Ads Campaign

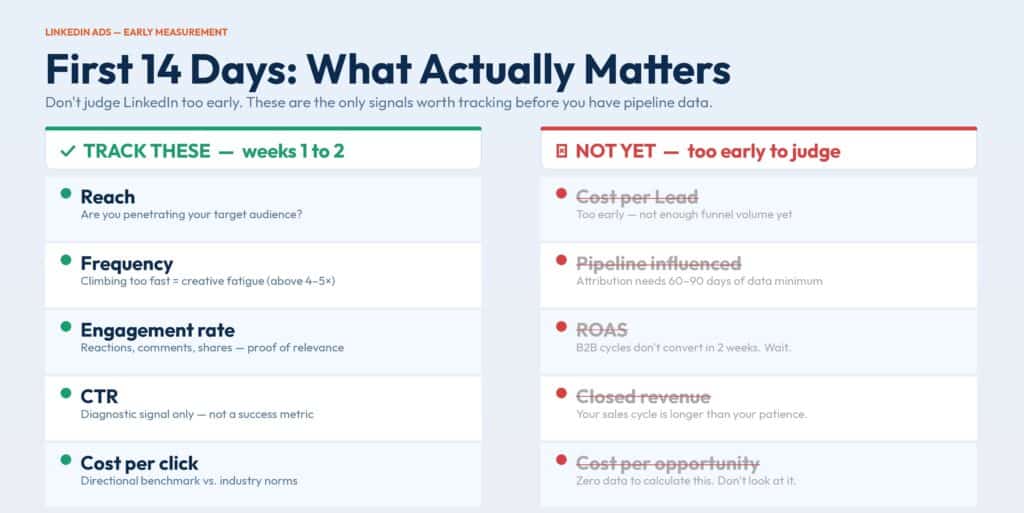

One of the biggest mistakes we see all the time is judging LinkedIn performance too early.

Teams launch a campaign, wait two weeks, look at conversions, and decide it’s either a success or a failure. That’s how you end up killing campaigns that would have driven pipeline, or scaling ones that just got lucky with a few early leads.

LinkedIn doesn’t work on a two-week cycle. It works in stages. And each stage has different signals that actually matter.

What to Measure in the First 14 Days

In the first two weeks, you are not measuring pipeline. You are measuring penetration.

Your goal is simple: are the right people seeing and engaging with your ads?

This is also where benchmark data earns its keep. If your CTR, engagement rate, or cost per click is roughly in line with what we see across campaigns in DemandSense, that’s a solid early validation — it tells you the campaign is in a healthy range before you have any conversion data to lean on.

Good performance at this stage has nothing to do with conversions. It looks more like:

- Solid reach inside your target audience

- Healthy engagement from the right job titles and companies

- Stable or improving CTR

- Frequency that isn’t spiking too fast

Check reach versus your audience size. If you’re targeting 80,000 people and only reaching 5,000 after two weeks, something is off. Budget, bids, or targeting may be too restrictive.

Watch frequency — if it’s climbing fast and engagement is dropping, rotate in new ads. Check engagement quality: are the right roles and companies actually interacting? If most engagement is coming from irrelevant job titles or tiny companies, your targeting needs work.

Use this period to test creatives, adjust targeting, refine messaging, and improve early engagement signals. Do not use it to decide whether LinkedIn ‘works’ for your business.

Think of the first few weeks as a calibration phase. The real business impact comes later.

What to Measure After 30–60 Days

Around the one- to two-month mark, you should start seeing early pipeline signals. Not necessarily closed deals, but at least qualified leads, sales conversations, opportunities forming, and movement into MQL and SQL stages.

This is when conversion data starts to matter — but it still needs context.

Lead quality first. Are you getting the right seniority levels, the right job functions, the right company sizes? Ten leads from junior roles at tiny companies is not a win. Three leads from directors at target accounts might be far more valuable.

Track MQL and SQL progression. Are LinkedIn leads moving forward? If they’re coming in but not progressing, figure out why — weak targeting, poor messaging, an offer that doesn’t resonate, or a broken sales follow-up process.

This is also a good time to sanity-check your conversion data. Compare platform-reported conversions against CRM opportunities and self-reported attribution from forms. If multiple signals point in the same direction, you can start building real confidence.

By this stage, look for early scalability signals: stable or improving cost per qualified lead, growing engagement from target accounts, and opportunities forming at a predictable rate.

What to Measure After 90+ Days

After about three months, you finally have enough data to evaluate LinkedIn ads properly. This is where you shift from lead metrics to business impact.

Start by looking at pipeline sourced from LinkedIn, pipeline influenced by LinkedIn, close rates of LinkedIn-exposed accounts, and overall revenue efficiency.

Influenced pipeline matters more than most teams realize. Most deals won’t originate from a LinkedIn form — they’ll come through organic search, direct traffic, or outbound. But if those accounts were exposed to your ads during the buying journey, LinkedIn played a role. Don’t let it go unaccounted.

Look at deal velocity. Are LinkedIn-exposed accounts moving through the pipeline faster? Closing at higher rates? If so, LinkedIn is shaping demand, not just collecting leads.

At this stage, you can make real strategic decisions: scale budgets on campaigns driving strong pipeline, pause or rework underperforming segments, and reposition offers that aren’t converting into real opportunities.

This is the point where ROI discussions actually make sense. Before 90 days, you’re mostly guessing. After 90 days, you have enough data to make confident calls.

That’s the timeline most teams get wrong. They try to make 90-day decisions with 14 days of data, and it leads to bad calls.

How to Validate LinkedIn Ads Impact Without Chasing Perfect Attribution

If you wait for perfect attribution on LinkedIn, you’ll be waiting forever — and I mean that literally.

B2B buying journeys involve multiple people, multiple channels, and sales cycles that stretch across quarters. No dashboard stitches that together cleanly. The goal isn’t perfect attribution. The goal is directional confidence — enough signals to say ‘yes, LinkedIn is helping us build pipeline’ or ‘no, this isn’t moving the needle.’

Here’s how to get there in practice.

Compare Attribution Windows Instead of Trusting Defaults

Most teams leave the default attribution window and never question it. That’s a mistake.

Compare conversions across one-day click, seven-day click, and 30-day click windows, and do the same for view-through windows. If a big chunk of your conversions only show up in the longer windows, LinkedIn is influencing decisions over time, not driving instant form fills. The point isn’t to find the ‘right’ window. It’s to understand how your buyers actually behave. Different products, price points, and sales cycles will produce different patterns.
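One way to run that comparison outside the dashboard is to count how many conversions each window would capture. A minimal sketch, assuming you can export the number of days between the ad interaction and the conversion; the sample data and the window lengths passed in are placeholders, so use whichever windows your reporting exposes.

```python
def conversions_by_window(days_to_convert, windows):
    """Count conversions that fall inside each attribution window (in days)."""
    return {w: sum(1 for d in days_to_convert if d <= w) for w in windows}


# Hypothetical export: days between ad click and conversion.
days = [0, 1, 3, 5, 12, 18, 25, 27]

counts = conversions_by_window(days, windows=(1, 7, 30))
total = len(days)
for window, n in counts.items():
    print(f"{window:>2}-day window: {n} of {total} conversions ({n / total:.0%})")
```

In this made-up sample, the one-day window captures a quarter of the conversions and the seven-day window half of them. A spread like that would suggest buyers are taking weeks, not hours, to act.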

Use Self-Reported Attribution as a Reality Check

Add a required field to your main conversion forms: ‘How did you hear about us?’ Then actually look at the answers.

You’ll often see answers like ‘LinkedIn,’ ‘saw your posts,’ ‘your ads,’ or ‘your content on LinkedIn,’ even when the platform dashboard shows zero conversions. This is your reality check. If buyers consistently mention LinkedIn, the channel is influencing decisions regardless of what last-click attribution says.
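Free-text answers are messy, so a rough keyword tally is usually enough to see the pattern. The sketch below is illustrative only: the sample answers and the keyword list are assumptions, and vague answers such as ‘saw your posts’ would still need a manual read.

```python
from collections import Counter


def tally_mentions(answers, keywords=("linkedin",)):
    """Bucket free-text answers by whether they mention any keyword."""
    counts = Counter()
    for answer in answers:
        text = answer.lower()
        bucket = "linkedin" if any(k in text for k in keywords) else "other"
        counts[bucket] += 1
    return counts


# Hypothetical form responses to "How did you hear about us?"
answers = [
    "LinkedIn",
    "Saw your posts on LinkedIn",
    "Google search",
    "A colleague",
    "your LinkedIn ads",
]

tally = tally_mentions(answers)
print(tally["linkedin"], tally["other"])  # 3 2
```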

Match LinkedIn Exposure with CRM Opportunity Data

Pull a list of accounts that were exposed to LinkedIn ads, then cross-reference it with accounts that entered the pipeline during the same period. Is a large portion of your opportunities coming from companies that saw or engaged with your ads? If so, LinkedIn is influencing pipeline, even if it didn’t get the last click.
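The cross-reference itself can be as simple as a set intersection on company names. A rough sketch, assuming both lists are plain name exports; the names are made up, and the `normalize` helper is a deliberately naive stand-in for real account matching (domains or CRM IDs are more reliable).

```python
def normalize(name: str) -> str:
    """Naive name cleanup; real matching would use domains or CRM IDs."""
    return name.strip().lower()


def exposed_share(exposed_accounts, opportunity_accounts) -> float:
    """Fraction of opportunity accounts that also appear in the exposed list."""
    exposed = {normalize(n) for n in exposed_accounts}
    opps = {normalize(n) for n in opportunity_accounts}
    if not opps:
        return 0.0
    return len(opps & exposed) / len(opps)


# Hypothetical exports from the ad platform and the CRM.
exposed_list = ["Acme Corp", "Globex", "Initech", "Umbrella"]
opportunity_list = ["acme corp ", "Globex", "Hooli", "Stark Industries"]

share = exposed_share(exposed_list, opportunity_list)
print(f"{share:.0%} of new opportunities came from exposed accounts")
```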

This is where pipeline influenced becomes more useful than pipeline sourced. Sourced deals are only part of the story. Influenced deals usually give a more honest view of LinkedIn’s role.

Run Lift Analysis: Before vs After, Exposed vs Non-Exposed

Before vs after: Look at pipeline creation before LinkedIn campaigns launched, then compare it to pipeline created after LinkedIn has been running for a few months. If overall pipeline volume or conversion rates improve and nothing else major changed over the same period (pricing, sales headcount, other channels), LinkedIn is likely contributing.

Exposed vs non-exposed: Split accounts into two groups — those exposed to LinkedIn ads and those that weren’t. Compare meeting rates, opportunity creation, and close rates. If exposed accounts consistently outperform, that’s a strong signal.
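The exposed vs non-exposed comparison boils down to two rates and the relative difference between them. Here is a minimal sketch with invented account counts; the function names are ours.

```python
def conversion_rate(converted: int, total: int) -> float:
    """Share of accounts in a group that reached the outcome being measured."""
    return converted / total if total else 0.0


def relative_lift(exposed_rate: float, baseline_rate: float) -> float:
    """How much higher the exposed rate is, relative to the baseline."""
    if baseline_rate == 0:
        raise ValueError("baseline_rate must be non-zero")
    return (exposed_rate - baseline_rate) / baseline_rate


# Hypothetical groups: accounts in each group and opportunities created.
exposed_rate = conversion_rate(converted=30, total=200)
non_exposed_rate = conversion_rate(converted=40, total=400)

lift = relative_lift(exposed_rate, non_exposed_rate)
print(f"exposed {exposed_rate:.1%} vs non-exposed {non_exposed_rate:.1%}, "
      f"lift {lift:.0%}")
```

One caveat worth keeping in mind: exposed accounts are the ones you chose to target, so they may have been better fits to begin with. Read the lift as a directional signal, not proof of causation, and run the same calculation for meeting rates and close rates to see whether the pattern holds.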

Why Validation Beats Attribution Perfection

Attribution asks: ‘Which channel gets the credit?’ Validation asks: ‘Is this channel helping us win more deals?’ In complex B2B environments, the second question is far more useful.

If self-reported data points to LinkedIn, target accounts are engaging, exposed accounts convert at higher rates, and pipeline grows after campaigns launch — you don’t need a perfect ROAS number. You already have enough evidence.

Stop chasing perfect attribution. Start looking for consistent signals.

Common LinkedIn Ads Measurement Mistakes That Kill Good Campaigns

Most LinkedIn campaigns don’t fail because of bad targeting or weak creative. They fail because they get judged with the wrong metrics at the wrong time.

The most common one: killing campaigns before the buying cycle plays out. Teams look at two or three weeks of data, see no conversions, and shut everything down. In B2B, the sales cycle can take months. You’re pulling the plug before the ads even have a chance to influence pipeline.

Second mistake: optimizing for clicks instead of influence. LinkedIn is not a high-CTR platform. People see your ads, remember your brand, and come back later through search, direct, or referrals. If you only value the click, you miss most of the impact.

Third: treating CTR or CPL as success metrics. A cheap lead from the wrong company is still a bad lead. High CTR does not pay the bills. Pipeline quality and revenue matter a lot more than surface-level efficiency metrics.

Then there’s confusing activity with progress. More leads, more clicks, more impressions — none of that guarantees business results. If those leads never become opportunities, you’re just creating busywork for the sales team.

And finally: trusting a single dashboard without context. LinkedIn, GA4, and your CRM will all tell slightly different stories. That’s normal. If you only look at one source, you’ll make decisions based on incomplete data. Always connect platform metrics to pipeline and revenue.

A Practical Measurement Philosophy for LinkedIn Ads

If you approach LinkedIn like a pure direct response channel, you’re going to misread the data and make bad calls.

LinkedIn is an influence channel first. Most buyers don’t click a LinkedIn ad and book a demo on the spot. They see your message, recognize your brand later, search for you, or respond to an outbound email. LinkedIn shapes the buying conversation long before the form fill happens.

Conversions confirm impact — they don’t define it. The real work usually happens earlier through impressions, engagement, and repeated exposure. If you only look at the final click, you ignore most of the journey that led to it.

Measure momentum before outcomes. In the early weeks, focus on signals like reach, engagement, and matched audience activity. Those tell you if the campaign is gaining traction. Pipeline and revenue come later. You have to build momentum before you expect outcomes.

Make decisions based on patterns, not snapshots. One bad week does not mean the campaign is failing. One good spike does not mean it’s a winner. Look at trends over 30, 60, or 90 days. When you see consistent movement in the right direction, that’s when you scale.

How to Measure LinkedIn Ads Performance with Confidence

If you remember one thing from this: LinkedIn performance happens in layers.

Early on, you’re looking at delivery and engagement. After a few weeks, you watch for pipeline signals. Over time, you judge the channel based on influenced revenue and overall business impact. When you respect those stages, the data stops being confusing and starts telling a clear story — and you stop making reactive decisions based on two weeks of incomplete data.

We built DemandSense specifically for this. Instead of guessing whether your early metrics are ‘good’ or ‘bad,’ you can benchmark them against real LinkedIn campaign data from accounts across industries and budget ranges — and know whether you’re actually on track. If that kind of clarity sounds useful, start a free trial and see how your campaigns stack up.

LinkedIn is not a quick-win channel. It’s an influence engine. The marketers who treat it that way, measure it in phases, and resist the urge to pull the plug too early are the ones who end up with a channel that compounds over time.

If you want a team to build the strategy, reporting, and optimization plan alongside you, book a strategy call with Impactable — we’ll walk you through the exact next steps.