Most marketers love chasing “industry averages.”

You’ve seen the numbers: LinkedIn CTR usually sits somewhere between 0.4% and 0.6%. Cool stat, but it’s meaningless without context.

Good for who? A cold audience seeing your brand for the first time? A retargeting campaign hitting users who already booked a demo? The benchmark doesn’t tell you any of that.

CTR only matters when you connect it to funnel stage, ad format, and audience warmth. That’s where the real story starts.

We pulled data from hundreds of SaaS campaigns to show what “good” actually looks like. You’ll see how CTR changes from cold to warm audiences, which ad formats pull the most attention, and how top-performing accounts keep engagement high without wasting budget.

CTR isn’t just a vanity metric. It influences your CPC, your relevance score, and how much of your spend turns into real pipeline.

Let’s dig in.

What CTR Really Measures (and Why It’s Overrated Alone)

CTR is simple math: clicks divided by impressions, multiplied by 100.

It tells you how many people clicked after seeing your ad. Straightforward. But here’s where most marketers mess up. They treat CTR like a scoreboard.
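As a quick illustration of that math, here is a minimal Python sketch. The function name `ctr_percent` and the sample numbers are made up for the example; they are not pulled from any campaign.

```python
def ctr_percent(clicks: int, impressions: int) -> float:
    """Return click-through rate as a percentage: clicks / impressions * 100."""
    if impressions <= 0:
        raise ValueError("impressions must be a positive number")
    return clicks / impressions * 100


# Hypothetical ad: 50 clicks on 10,000 impressions.
print(f"{ctr_percent(50, 10_000):.2f}%")
```

The guard against zero impressions is there because a brand-new ad with no delivery has no meaningful CTR yet.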

High CTR Doesn’t Always Mean Success

A high CTR doesn’t automatically mean your campaign is winning. If you’re targeting the wrong audience, all those clicks just drain your budget faster.

Low CTR Isn’t Always a Problem

A lower CTR on a top-of-funnel awareness campaign isn’t a failure. Sometimes the goal is exposure, not clicks.

What CTR actually measures is relevance. The more your ad resonates with your audience, the higher your CTR climbs. LinkedIn’s algorithm rewards that engagement with cheaper CPCs and better placements.

Improving CTR can reduce cost, but only if it comes from reaching the right audience with the right message.

Use CTR as a signal, not a finish line. It points you toward what’s working, but the real performance lives deeper in the funnel.

The Real LinkedIn CTR Benchmarks (Not the Fluffy Averages)

Most “average CTR” reports blend every campaign together. That’s useless if you’re trying to judge your own performance.

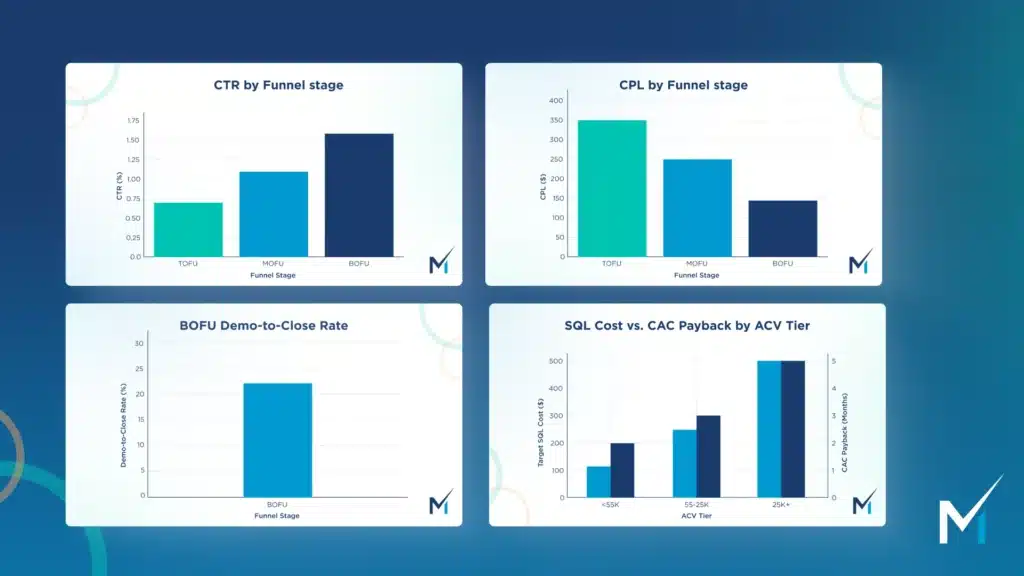

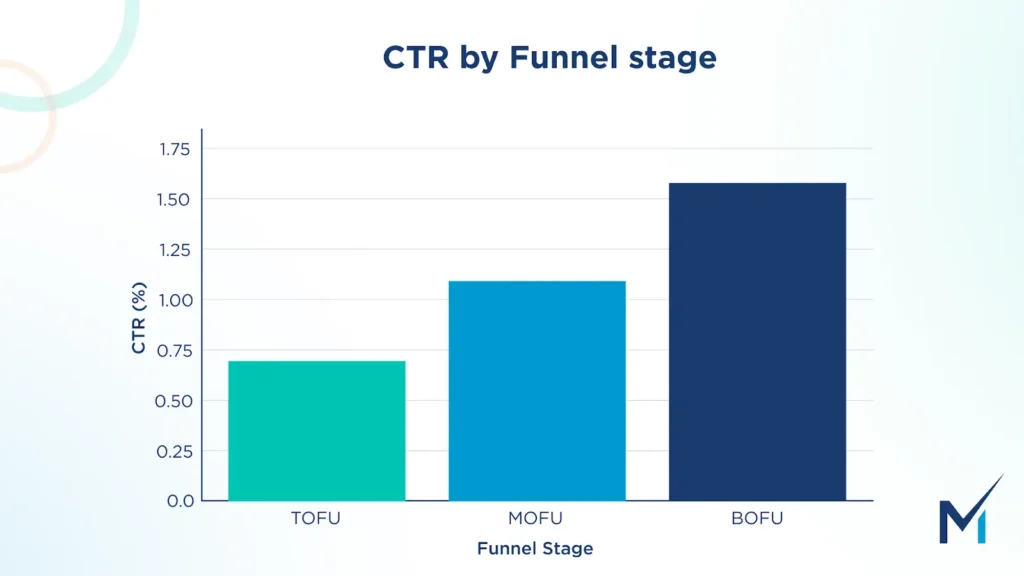

Here’s what our data actually shows across hundreds of SaaS accounts:

Top of Funnel (TOFU)

CTR: 0.30–0.55%

Video and document ads win here: anything that educates and builds trust.

Middle of Funnel (MOFU)

CTR: 0.55–0.80%

Think carousel ads with data or insights, or lead gen forms tied to gated content. These audiences already know your brand, so engagement naturally climbs.

Bottom of Funnel (BOFU)

CTR: 0.80–1.3%

This is where conversation ads and retargeting campaigns dominate. The audience is smaller but warmer, and intent is much higher.

These ranges shift because intent, audience overlap, and creative type all change as people move through the funnel. A cold video ad won’t pull the same CTR as a retargeting form ad, and it shouldn’t.

Benchmarks only make sense when they’re tied to context. Compare performance by funnel stage, not against some random platform-wide average.
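If you want to run that comparison yourself, a rough sketch might look like the one below. The ranges are the funnel-stage figures listed above; the names `BENCHMARKS` and `compare_to_stage` are just placeholders for this example, and treating the range edges as "within range" is an assumption.

```python
# CTR ranges (in percent) by funnel stage, taken from the figures above.
BENCHMARKS = {
    "TOFU": (0.30, 0.55),
    "MOFU": (0.55, 0.80),
    "BOFU": (0.80, 1.30),
}


def compare_to_stage(ctr: float, stage: str) -> str:
    """Say whether a CTR (in percent) is below, within, or above its stage range."""
    low, high = BENCHMARKS[stage.upper()]
    if ctr < low:
        return "below range"
    if ctr > high:
        return "above range"
    return "within range"


# The same 0.45% CTR reads very differently depending on the stage.
print(compare_to_stage(0.45, "TOFU"))
print(compare_to_stage(0.45, "BOFU"))
```

The point of keying the lookup on stage is that the same number never gets judged against a blended platform-wide average.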

Ad Format Benchmarks: Which Creative Type Actually Pulls Clicks

Not every ad should be judged by the same CTR. Different formats serve different purposes, and the numbers prove it.

Here’s how they stack up from our campaign data:

Top Performers

- Carousel Ads: Great for storytelling and quick takeaways. CTRs often hit 0.8–1.1%.

- Document Ads: Deliver strong engagement when you lead with value. CTR range: 0.7–1%.

- Video Ads: Best for awareness and pattern interruption. CTR range: 0.5–0.8%.

Mid Tier

- Single Image Ads: Dependable workhorses, solid performance but not explosive. CTR range: 0.4–0.6%.

- Conversation Ads: Hit harder for warm audiences. CTR looks modest but conversion rates are higher.

- Lead Gen Forms: Lower CTRs than carousels, but they close better when targeting the right list.

Low CTR but High Intent

- Text Ads and Message Ads: Clicks are fewer, but those who engage are usually ready to buy.

Some ad types sacrifice CTR for better conversion quality. Message Ads, for example, rarely pull impressive CTRs but can drive top-tier leads when used for retargeting or event invites.

The point isn’t to chase the highest CTR. It’s to match creative format with intent.

Use the right creative for the right goal. Not every click is equal.

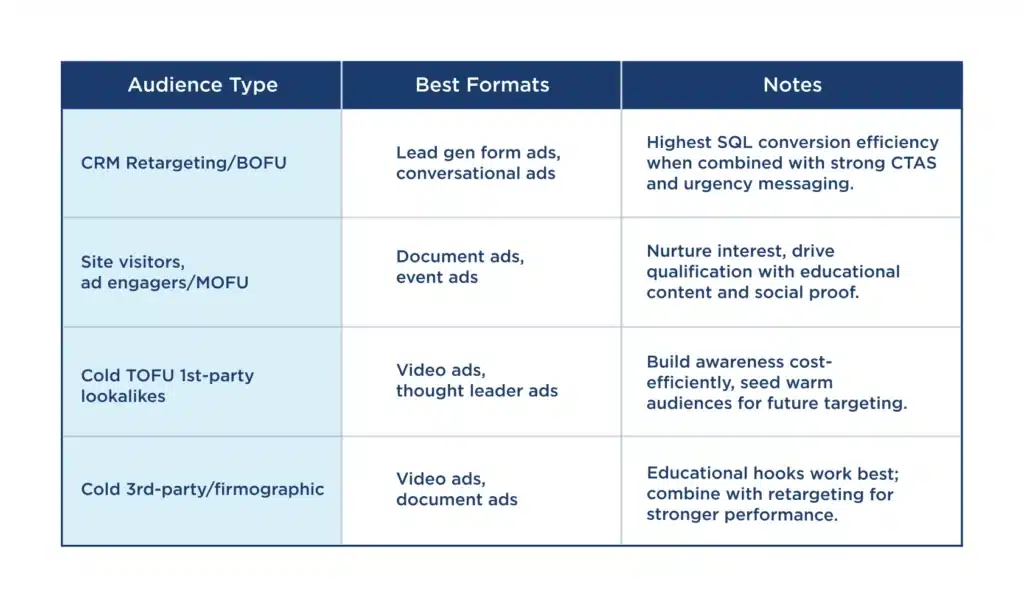

Creative + Audience Fit = CTR Multiplier

Your creative only works if it matches the audience you’re showing it to. The tighter the fit, the stronger the engagement.

From our benchmark data, campaigns that align creative type with audience temperature consistently outperform the rest.

Here’s how it plays out in practice:

Cold Audiences

Use video or document ads that educate and build trust. These people are meeting your brand for the first time, so focus on awareness, not conversion.

Warm Audiences

Go with carousel ads or demo-focused creatives. They already know who you are, so give them proof, stats, or customer stories to move them closer to action.

Retargeting Audiences

Run personalized lead gen or conversation ads. They’re already in your funnel, and a clear offer or direct CTA can convert them faster than another awareness ad.

When creative and audience alignment scores above 80, CTR often doubles compared to mismatched campaigns.

The takeaway is simple. Stop recycling the same ad across every audience. Match the creative to where people are in their buying cycle, and your clicks will follow.

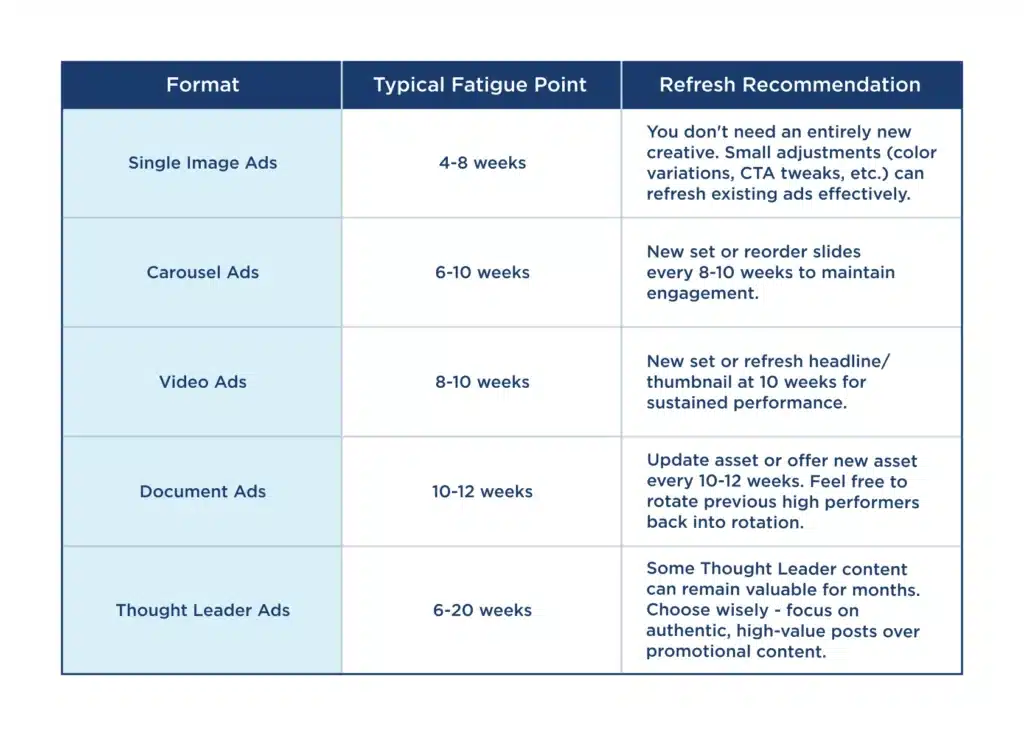

Ad Fatigue & Refresh Benchmarks

Even great creative has a shelf life. Every campaign hits a point where performance starts sliding because people have seen the ad too many times.

Our data shows that CTR usually drops 35–50% after week three or four if the ad isn’t refreshed. That decline hits faster for static formats and slower for interactive ones, but it always happens.

Recommended Refresh Cadence

- Video or Document Ads: every 3–4 weeks

- Static or Carousel Ads: every 2–3 weeks

- Lead Gen or Conversation Ads: every 4–5 weeks

The goal isn’t to swap creative the second performance dips. Track your fatigue trendlines, spot when CTR begins to flatten, and plan your next iteration before the drop turns into waste.

Refreshing on time keeps your ads competitive and your budget working where it should, on audiences that still pay attention.
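One simple way to track a fatigue trendline is to compare each week’s CTR with the best week so far. The sketch below is only an illustration: the function name `fatigue_week`, the sample weekly numbers, and the default 35% threshold (borrowed from the low end of the drop range above) are all assumptions you would tune for your own account, for example by setting a smaller threshold as an early warning.

```python
from typing import Optional, Sequence


def fatigue_week(weekly_ctr: Sequence[float], drop_threshold: float = 0.35) -> Optional[int]:
    """Return the first 1-based week where CTR has fallen by at least
    drop_threshold (as a fraction) from its running peak, or None."""
    peak = 0.0
    for week, ctr in enumerate(weekly_ctr, start=1):
        peak = max(peak, ctr)
        if peak > 0 and (peak - ctr) / peak >= drop_threshold:
            return week
    return None


# Hypothetical weekly CTRs (in percent) for one ad.
weekly = [0.60, 0.62, 0.55, 0.38]
print(fatigue_week(weekly))                       # week that crosses the 35% drop
print(fatigue_week(weekly, drop_threshold=0.10))  # earlier warning at a 10% drop
```

Measuring against the running peak rather than week one avoids false alarms on ads that take a week or two to ramp up.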

How to Actually Improve CTR (the Smart Way)

CTR improves when your testing and creative process are built around data, not gut feeling. Here’s the system that works best across B2B campaigns we manage.

- Start broad, refine fast.

Test multiple audience segments early, then double down where CTR climbs. The best data comes from variation, not from over-optimizing too soon.

- A/B test creative types weekly.

Change one variable at a time (headline, image, or CTA) and keep the version that wins with statistical significance. Small creative shifts often make the biggest difference.

- Sync creative cadence with audience cycles.

Retargeting, cold, and in-market audiences don’t fatigue at the same rate. Plan new creative drops based on how long each audience stays active.

- Optimize for scroll-stoppers, not clicks.

Motion, contrast, first-line hooks, and chart visuals stop the thumb. Focus on content that earns attention before you chase conversions.

- Measure CTR next to conversion metrics.

High CTR means nothing if the leads are unqualified. Always check cost per lead and conversion rate before celebrating a click spike.
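For the A/B testing step above, one common way to check whether a variant really wins is a two-proportion z-test on clicks and impressions. This is a generic statistics sketch, not a description of any specific tool or of how LinkedIn evaluates tests; the function name and the sample numbers are hypothetical.

```python
from math import erfc, sqrt


def two_proportion_z_test(clicks_a: int, imps_a: int, clicks_b: int, imps_b: int):
    """Compare the CTR of variant B against variant A.

    Returns (z, p_value), where p_value is two-sided under the normal approximation.
    """
    p_a = clicks_a / imps_a
    p_b = clicks_b / imps_b
    pooled = (clicks_a + clicks_b) / (imps_a + imps_b)
    se = sqrt(pooled * (1 - pooled) * (1 / imps_a + 1 / imps_b))
    if se == 0:
        raise ValueError("cannot test: pooled click rate is 0 or 1")
    z = (p_b - p_a) / se
    p_value = erfc(abs(z) / sqrt(2))  # two-sided tail probability
    return z, p_value


# Hypothetical test: headline A got 40 clicks, headline B got 70, on 10,000 impressions each.
z, p = two_proportion_z_test(40, 10_000, 70, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value (0.05 is a common cutoff) suggests the CTR gap is unlikely to be noise. With only a few hundred impressions per variant, the normal approximation gets shaky, so let the test run before calling a winner.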

Improving CTR is about rhythm, testing, and context. The goal isn’t more clicks, it’s smarter engagement that actually drives revenue.

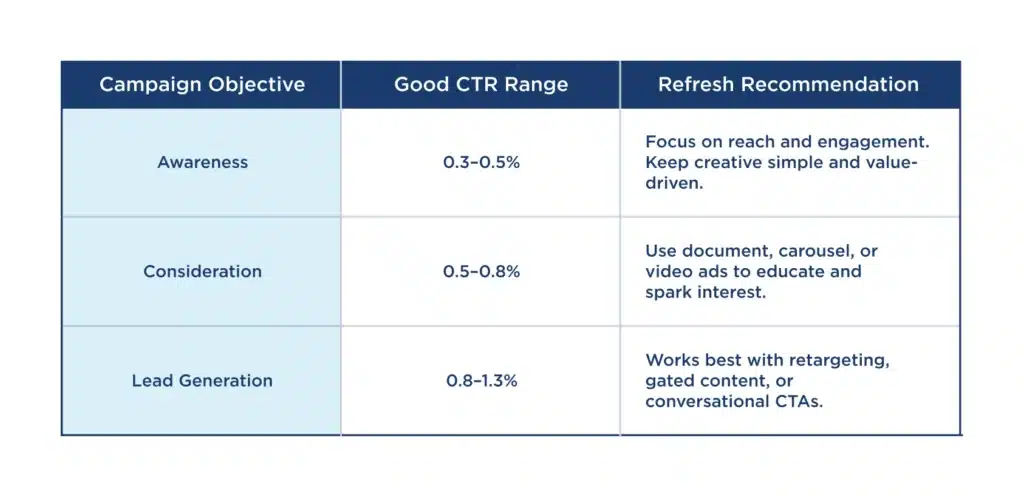

What “Good CTR” Means by Campaign Goal

A “good” CTR only makes sense when you tie it to the campaign’s goal. Each objective measures success differently, so the right benchmark depends on what you’re trying to achieve.

Good CTR is contextual. Compare results within the same campaign goal, not across them. A 0.4% CTR on a cold awareness ad can outperform a 1% CTR lead-gen ad when you look at total impact and efficiency.

What matters most is that your CTR aligns with your intent and the step of the funnel you’re targeting.

When to Stop Obsessing Over CTR

There’s a point where chasing higher CTR stops paying off. Once you pass the 1–1.2% range, you usually start seeing diminishing returns. That kind of spike often means your targeting is too narrow or your ad is clickbait-heavy. Both drive cheap clicks, not pipeline.

The next layer of metrics tells the real story. Look at:

- Cost per Lead (CPL)

- Sales Qualified Lead percentage (SQL%)

- Cost per Pipeline or Opportunity

These numbers show if your clicks are turning into real opportunities or just vanity traffic.

In our own data, Impactable campaigns with 0.7% CTR often delivered lower CPL than those with 1.2% CTR. The ads with fewer but higher-quality clicks brought in stronger leads and better revenue outcomes.
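To see how that can happen, here is a small sketch that puts CTR next to CPL and SQL%. Every number in it is invented purely to illustrate the pattern; none of it is real campaign data, and the `Campaign` class and its field names are assumptions for the example.

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    name: str
    spend: float       # total spend in your currency
    impressions: int
    clicks: int
    leads: int
    sqls: int          # leads that became sales qualified

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions * 100

    @property
    def cpl(self) -> float:
        return self.spend / self.leads

    @property
    def sql_rate(self) -> float:
        return self.sqls / self.leads * 100


# Hypothetical campaigns with the same spend and reach.
steady = Campaign("steady", spend=5000, impressions=100_000, clicks=700, leads=50, sqls=20)
spiky = Campaign("spiky", spend=5000, impressions=100_000, clicks=1200, leads=40, sqls=8)

for c in (steady, spiky):
    print(f"{c.name}: CTR {c.ctr:.2f}%, CPL {c.cpl:.2f}, SQL% {c.sql_rate:.0f}")
```

In this made-up case the 1.2% CTR campaign buys more clicks but fewer leads, so its cost per lead is higher. That is the situation the deeper metrics are meant to expose.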

CTR is a pulse check, not the goal. It tells you if your audience cares enough to click, but not if they’re the right people to convert.

Summary / Key Takeaways

CTR is a reflection, not a goal. It shows how well your message connects, not how successful your campaign is.

The best results come when your creative, audience, and funnel intent all align. Each layer supports the other. A mismatch in any of them drags your numbers down.

Keep your ads fresh. Creative fatigue hits faster than most marketers expect, and refreshing regularly protects performance.

And finally, measure CTR in context. Look at it alongside CPC, CPL, and conversion metrics. The combination of those tells you if your spend is turning into revenue or just clicks.

Treat CTR as the health indicator it is. When it rises for the right reasons, everything else in your funnel benefits.

If you want to know where your campaigns actually stand, we can help.

At Impactable, we build full-funnel LinkedIn reporting dashboards and creative testing systems that show which ads drive real ROI, not just clicks.

Book a free creative audit and get benchmarks pulled from your own data.

Need Help With Your LinkedIn Ads?

If you want expert eyes on your campaigns or you’re not sure where performance is slipping, we’ve got you.

At Impactable, we build and manage full-funnel LinkedIn campaigns that focus on real pipeline growth, not vanity metrics.

Book a free call and see where your LinkedIn ads can perform better.